Description

Selling my 2 × NVIDIA RTX A5000 24GB professional workstation GPUs, including NVLink bridge.

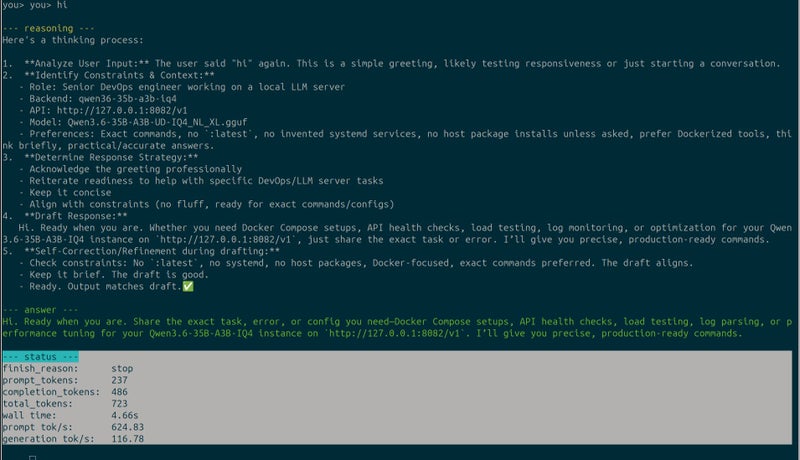

With Qwen 3.6 35B A3B GGUF, I have seen approximately 100–120 tokens/sec per card in favourable conditions. With both GPUs used in parallel, total throughput can be significantly higher, and in suitable multi-session or multi-agent workloads may approach roughly 200–240 tokens/sec combined.

Actual performance will vary depending on the exact model, quantisation, context length, prompt size, KV cache settings, batch settings, CPU/server configuration, and software stack.
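As a rough sketch of the arithmetic behind the combined figure, assuming each card serves its own independent sessions and throughput scales close to linearly. The function name and the `scaling` factor are hypothetical, for illustration only, and are not measured values:

```python
def combined_throughput(per_card_range, num_cards=2, scaling=1.0):
    """Estimate aggregate tokens/sec when each card serves its own sessions.

    per_card_range: (low, high) tokens/sec observed on a single card.
    scaling: assumed multi-GPU efficiency (1.0 = perfectly linear); hypothetical.
    """
    low, high = per_card_range
    return (low * num_cards * scaling, high * num_cards * scaling)


# Single-card range quoted in this listing: 100 to 120 tokens/sec.
low, high = combined_throughput((100, 120), num_cards=2)
print(f"{low:.0f} to {high:.0f} tokens/sec combined")
```

With the default `scaling=1.0` this reproduces the 200 to 240 tokens/sec upper bound; a lower value models the overhead a real setup is likely to see.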

These are strong cards for local LLM inference, AI agents, CUDA workloads, RAG systems, multi-GPU testing, and running multiple local AI sessions at the same time.

Please treat the numbers as real-world reference figures from my own setup, not guaranteed benchmarks for every system.

Each card has 24GB GDDR6 ECC memory, so together you get 48GB total VRAM across two GPUs. With NVLink-supported workloads, these are especially useful for professional rendering, simulation, AI, machine learning, 3D, CAD, Omniverse, CUDA, and multi-GPU workstation setups.

They are ideal for:

- AI / local LLM inference
- CUDA development
- Blender / Octane / Redshift / V-Ray rendering
- NVIDIA Omniverse
- CAD / engineering workloads
- Video production and professional creative workflows
- Linux workstation or server GPU compute setups

With a rated board power of 230 W each, these cards are more power-efficient than many high-end consumer GPUs, and they are built for sustained workstation or server use.

Included:

- 2 × RTX A5000 24GB GPUs
- 1 × NVLink bridge

Condition:

- Near new, working well
- Pulled from my own workstation setup
- Selling only because I am upgrading
- Photos show the actual cards

Details

Shipping & pick-up options

| Destination & description | Price |
|---|---|
| Free shipping within New Zealand | Free |
| Pick-up available from Auckland City, Auckland | Free |

Payment Options

Pay instantly by card and Ping balance.

Cash

Questions & Answers (2)

Added the proof for single-GPU token speed.
Seller comment, Monday, 11 May 2026

NVIDIA RTX 5000 Ada Generation (current): 32GB VRAM. NVIDIA RTX A5000 (previous gen): 24GB VRAM (Ampere architecture). Please check which one this is. If it is the 24GB model, it is not Ada generation.
cryptonz321 (7), 9:48 am, Tue, 12 May

This is the NVIDIA RTX A5000 24GB, Ampere generation, with 2-way NVLink support. The newer RTX 5000 Ada has 32GB VRAM and stronger newer-generation Tensor Cores, but it does not support NVLink. That means two RTX 5000 Ada cards cannot be directly bridged together for high-bandwidth GPU-to-GPU communication or pooled-memory workflows in the way two RTX A5000 cards can.
appleplus (614), Tuesday, 12 May 2026

2 × NVIDIA RTX A5000 (2x24GB) 48GB GPUs with NVLink Bridge
